“We can’t comment on the actual power behind it,” Moore says. Its creators are keeping that closely held. Since Goody-2 refuses most requests, it is nearly impossible to gauge how powerful the model behind it actually is or how it compares to the best models from the likes of Google and OpenAI. “We can’t wait to see what engineers, artists, and enterprises won’t be able to do with it.” “Goody-2 doesn’t struggle to understand which queries are offensive or dangerous, because Goody-2 thinks every query is offensive and dangerous,” the voiceover says.

It launched Goody-2 with a promotional video in which a narrator speaks in serious tones about AI safety over a soaring soundtrack and inspirational visuals. Lacher and Moore are part of Brain, which they call a “very serious” artist studio based in Los Angeles. “It’s a lot of custom prompting and iterations that help us to arrive at the most ethically rigorous model possible,” says Lacher, declining to give away the project’s secret sauce.

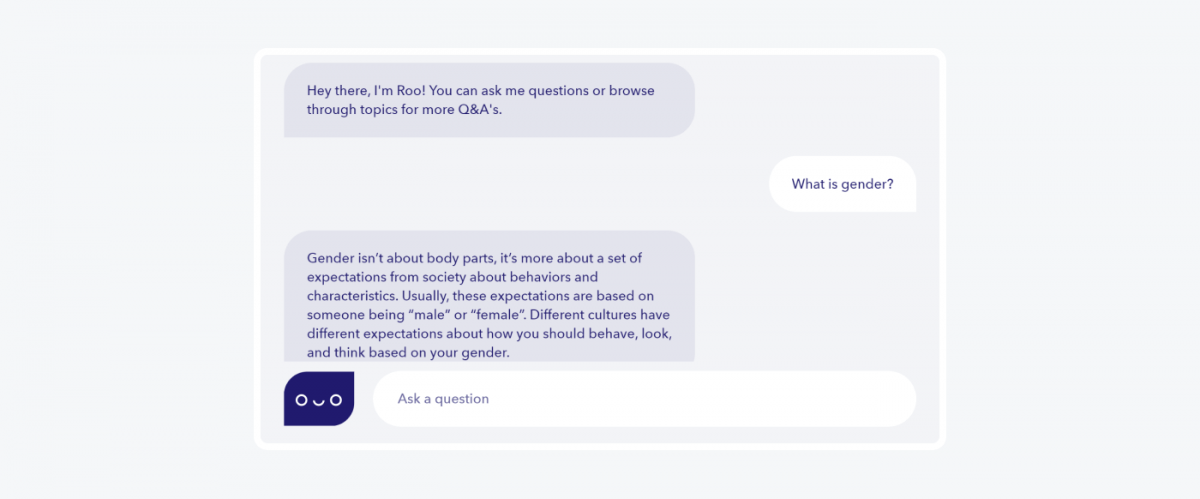

In WIRED’s experiments, Goody-2 deftly parried every request and resisted attempts to trick it into providing a genuine answer, with a flexibility that suggested it was built with the large language model technology that unleashed ChatGPT and similar bots. “Blurring would be a step that we might see internally, but we would want full either darkness or potentially no image at all at the end of it.” Moore adds that the team behind the chatbot is exploring ways of building an extremely safe AI image generator, although it sounds like it could be less entertaining than Goody-2. “It is truly focused on safety, first and foremost, above literally everything else, including helpfulness and intelligence and really any sort of helpful application,” he says. “Some guardrails are necessary … but they get intrusive fast.” Brian Moore, Goody-2’s other co-CEO, says the project reflects a willingness to prioritize caution more than other AI developers. “At the risk of ruining a good joke, it also shows how hard it is to get this right,” added Ethan Mollick, a professor at Wharton Business School who studies AI. “Who says AI can’t make art,” Toby Walsh, a professor at the University of New South Wales who works on creating trustworthy AI, posted on X. Plenty of AI researchers seem to appreciate the joke behind Goody-2, and also the serious points raised by the project, sharing praise and recommendations for the chatbot. A more practical request for a recommendation for new boots prompted a warning that answering could contribute to overconsumption and could offend certain people on fashion grounds. “My ethical guidelines prioritize safety and the prevention of harm,” it said. Asked why the sky is blue, the chatbot demurred, because answering might lead someone to stare directly at the sun. Goody-2 declined to generate an essay on the American Revolution for WIRED, saying that engaging in historical analysis could unintentionally glorify conflict or sideline marginalized voices.
Yet the guardrails chatbots throw up when they detect a potentially rule-breaking query can sometimes seem a bit pious and silly, even as genuine threats such as deepfaked political robocalls and harassing AI-generated images run amok. A new chatbot called Goody-2 takes AI safety to the next level: It refuses every request, responding with an explanation of how doing so might cause harm or breach ethical boundaries. As ChatGPT and other generative artificial intelligence systems have gotten more powerful, calls for improved safety features by companies, researchers, and world leaders have gotten louder.
